“Most enterprises are not failing at AI because of technology; they’re failing because of operating model gaps.”

That thought stayed with me after a recent NASSCOM roundtable on accelerating enterprise AI adoption. I had walked in expecting a conversation about models, tools, and benchmarks. I walked out with a very different observation.

Across industries, scales, and maturity levels, the pattern is consistent. The gap between an impressive pilot and durable business value isn’t a technology problem. It’s an operating model problem.

At InfoVision, we see this every day in our conversations with clients. That is the lens I want to share here: not theory, but what we’ve learned building and delivering AI at scale for enterprises across retail, BFSI, healthcare, and technology.

Beyond the Pilot

Almost every enterprise AI program I encounter starts the same way: excitement, experimentation, a handful of promising POCs. Very few cross the chasm to scaled, measurable value.

The maturity curve, as I’ve come to see it, runs something like this:

The most important shift in that journey is the move from a System of Work, where AI simply executes tasks, to a System of Context, where AI understands the business nuance in which it operates: the processes, the policies, the customer, the risk posture, the institutional memory. A chatbot that can answer questions is a capability. An AI that knows how your organization actually works is a strategic asset.

A System of Context is an AI implementation that understands the business it operates in rather than simply executing generic tasks. That understanding spans the organization’s processes, policies, customers, risk posture, and institutional memory. Moving to a System of Context is what separates enterprise AI programs that stay stuck in pilot from those that scale into durable business value.

Why Do 95% of AI Initiatives Never Scale?

The most common failure mode I see is deceptively simple: there is no clear end goal. Teams jump into AI without first defining what success looks like, how ROI will be measured, or what “scale” actually means for the problem they are solving.

Without that clarity, even the best pilots remain pilots. Budget gets spent, demos get built, and the organization learns a lot; however, nothing meaningful ends up in production. Clarity of outcomes is the single highest-leverage input to an AI program, and it is almost always the cheapest to get right.

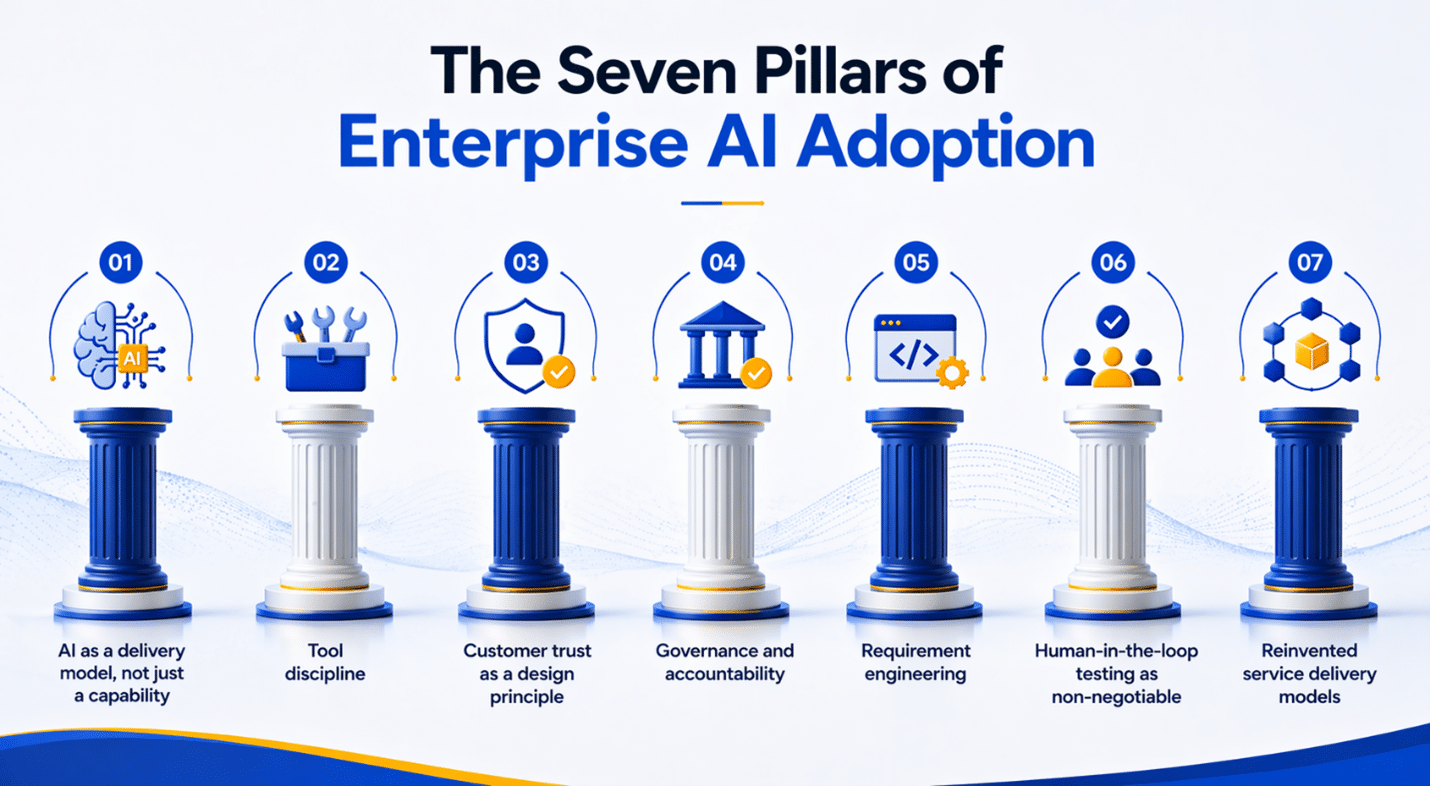

The Seven Pillars of Enterprise AI Adoption

From the hundreds of conversations our delivery and consulting teams have had with enterprise leaders, a consistent set of foundations has emerged. I think of them as the seven pillars of serious AI adoption:

- AI as a delivery model, not just a capability. AI must reshape how work gets done, not sit alongside it as a feature.

- Tool discipline. Fewer tools, used exceptionally well, beat a sprawling toolchain every time.

- Customer trust as a design principle. Trust is not a compliance checkbox but an architectural decision.

- Governance and accountability. Clear ownership of models, data, and outcomes across the lifecycle.

- Requirement engineering. Prompting, context design, and automation are the new SDLC skills.

- Human-in-the-loop testing as non-negotiable. Oversight is not friction; it is the thing that lets you scale responsibly.

- Reinvented service delivery models. Pods, pricing, and SLAs all have to change. Otherwise, AI just subsidises old ways of working.

In short: enterprise AI is not a technology transformation. It is an operating model transformation.

Where AI Actually Creates Value

For all the discussion around AI, the business case is surprisingly narrow. The value almost always shows up in four places: engineering productivity; revenue differentiation and customer retention; outcome-based delivery improvements; and clearer trade-offs between traditional and AI-driven ways of working.

At InfoVision, one of the practical ways we de-risk this for clients is through what we call the Parallel Pod Model — roughly 30% AI-first delivery running alongside 70% traditional delivery for the same program. The split is not dogma; it is a structure. It lets our clients compare apples to apples, generate real performance data, and scale what works. We’ve seen this model cut through months of “will it work in our context” debates by producing evidence inside a single release cycle.

Underpinning this is our AI Center of Excellence and a growing library of platform accelerators – reusable assets for document intelligence, RAG-based knowledge systems, OCR-driven data extraction, and domain-specific evaluation harnesses. These accelerators exist for one reason: to compress the distance between a promising idea and a production-grade outcome. The enterprises that win with AI are the ones that don’t rebuild the same plumbing on every engagement.

Data extraction, retrieval-augmented generation, and OCR are no longer novelties – they are the competitive differentiators of the next five years.
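To make the like-for-like comparison behind the Parallel Pod Model concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: the `PodResult` fields, the two metrics, and every number are hypothetical and are not InfoVision’s actual measurement framework. The idea is simply that results are normalized by each pod’s share of capacity, so a 30% pod and a 70% pod can be compared fairly.

```python
from dataclasses import dataclass


@dataclass
class PodResult:
    """One pod's results over a release cycle (all fields are hypothetical)."""
    name: str
    capacity_share: float    # fraction of program capacity, e.g. 0.30
    stories_delivered: int
    defects_escaped: int


def normalized_throughput(pod: PodResult) -> float:
    """Stories delivered per unit of capacity share, so pods of different sizes compare fairly."""
    return pod.stories_delivered / pod.capacity_share


def defect_rate(pod: PodResult) -> float:
    """Escaped defects per delivered story."""
    return pod.defects_escaped / pod.stories_delivered


# Made-up numbers, purely for illustration.
ai_first = PodResult("ai_first", 0.30, stories_delivered=24, defects_escaped=3)
traditional = PodResult("traditional", 0.70, stories_delivered=42, defects_escaped=4)

for pod in (ai_first, traditional):
    print(f"{pod.name}: throughput={normalized_throughput(pod):.1f}, "
          f"defect_rate={defect_rate(pod):.3f}")
```

In this invented scenario the AI-first pod delivers more per unit of capacity but lets more defects escape per story, which is exactly the kind of trade-off a side-by-side structure is meant to surface.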

Seven Checks Before You Go to Production

Before any AI workload moves from pilot to production, we ask our delivery teams and clients to stress-test against seven checks. None of them are glamorous. All of them are non-negotiable:

- Security embedded early, not bolted on after launch.

- Regulatory alignment from day one, especially in regulated industries.

- Clear ownership between pilot teams and production teams.

- An MVP tied to a specific business outcome, not to a technology demo.

- A deliberate integration strategy with existing systems and data.

- Defined KPIs, agreed with the business before the build starts.

- Clarity on the end goal — what “done” looks like, and how we’ll know.

And sitting above all seven: human-in-the-loop governance. That is how you scale AI in an enterprise context without scaling risk at the same rate.

At InfoVision, we stress-test every AI workload against seven checks before it goes live: security embedded from day one, regulatory alignment, clear ownership between pilot and production teams, an MVP tied to a specific business outcome, a deliberate integration strategy with existing systems and data, agreed KPIs, and unambiguous clarity on the end goal. Sitting above all seven is human-in-the-loop governance, a mechanism that lets enterprises scale AI without scaling risk at the same rate.
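For teams that want to make the checklist explicit, the seven checks and the human sign-off could be encoded as a simple release gate. The sketch below is one possible shape, not a description of InfoVision’s tooling: the check identifiers, the `ReadinessReview` class, and the example workload name are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set

# Hypothetical identifiers for the seven checks named in the text.
SEVEN_CHECKS = (
    "security_embedded_early",
    "regulatory_alignment",
    "clear_ownership",
    "mvp_tied_to_business_outcome",
    "integration_strategy",
    "kpis_agreed_with_business",
    "end_goal_clarity",
)


@dataclass
class ReadinessReview:
    """Tracks which checks a workload has passed and who signed off."""
    workload: str
    passed_checks: Set[str] = field(default_factory=set)
    human_approver: Optional[str] = None  # human-in-the-loop sign-off

    def missing_checks(self) -> List[str]:
        """Checks not yet passed, in the order they are listed above."""
        return [c for c in SEVEN_CHECKS if c not in self.passed_checks]

    def ready_for_production(self) -> bool:
        """All seven checks passed AND a named human has approved."""
        return not self.missing_checks() and self.human_approver is not None


# Example: one check outstanding and no human sign-off yet.
review = ReadinessReview(
    workload="example-workload",  # hypothetical name
    passed_checks=set(SEVEN_CHECKS) - {"kpis_agreed_with_business"},
)
print(review.missing_checks())
print(review.ready_for_production())
```

The design choice worth noting is that the human approval sits outside the list of checks: a workload that passes all seven still does not ship until a named person signs off.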

The Real Transformation

If I had to distil what I’ve learned from our own AI CoE, from our Parallel Pod engagements, and from conversations like the NASSCOM roundtable, it would be this:

AI transformation is not about doing more with AI. It is about redefining how value is created and delivered.

Trust, governance, and clarity of outcomes are the three inputs that compound. Human oversight is the enabler of scale, not the thing slowing you down. And tool overload, left unchecked, will quietly eat your productivity gains before you ever see them.

At InfoVision, we are choosing to build that discipline into our delivery model, our accelerators, and the way we engage with our clients. It is, in every sense, how we are adopting AI ourselves – by changing how we deliver, not just what we deliver.

The most honest conversations I’ve had about AI aren’t about what’s possible. They’re about what’s actually working, and what quietly isn’t.