The banking industry is on the cusp of a digital revolution spearheaded by 5G technology, the fifth generation of mobile communication. According to Grand View Research, the global digital payment market was valued at $81.03 billion in 2022 and is expected to expand at a compound annual growth rate (CAGR) of 20.8% from 2023 to 2030. This growth can be attributed largely to the widespread adoption of 5G networks, which are predicted to cover approximately one-third of the global population by 2025.
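To get a feel for what a 20.8% CAGR implies, here is a minimal sketch of the compounding arithmetic. It assumes the 2022 valuation is the base and that growth compounds over eight annual periods through 2030; the function and constant names are illustrative only, and the research firm's own published forecast may differ from this back-of-the-envelope figure.

```python
def project_market_size(base_value: float, cagr: float, years: int) -> float:
    """Compound base_value forward by `years` annual periods at rate `cagr`."""
    return base_value * (1 + cagr) ** years


# Figures quoted in the paragraph above.
BASE_2022_BILLION_USD = 81.03
CAGR = 0.208

# Assumption: 2022 is the base year, so 2030 is eight compounding periods away.
YEARS = 2030 - 2022

projected_2030 = project_market_size(BASE_2022_BILLION_USD, CAGR, YEARS)
print(f"Implied 2030 market size: ${projected_2030:,.1f} billion")
```

Under these assumptions, the market would grow to roughly $367 billion by 2030, about four and a half times its 2022 size.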

5G technology promises much faster internet speeds, lower latency, greater bandwidth, and the capacity to connect billions of devices, which opens up a world of possibilities for the financial sector. The financial services industry has long been an early adopter of digital technology, from ATMs to online and mobile banking.

With the integration of 5G in banking, an unparalleled customer experience awaits, particularly in the realms of digital banking and payments. This transformative technology has the potential to enhance fraud prevention measures, thus making transactions more secure and reliable.

5G in the banking industry

5G in banking holds the potential not only to revolutionize the digital payment sector but also to enhance the overall banking experience for customers. By leveraging high-speed connectivity, 5G in financial services can streamline digital communications and payments, leading to improved quality in digital banking services. Here are a few ways in which 5G technology is set to transform digital banking:

Transforming the payment sector

The financial sector can leverage the high-speed connectivity of 5G to perform more complex processes quickly, significantly reducing waiting times for tasks such as ID verification during new customer onboarding and loan tracking. The quality of contactless payment services can also improve, with the potential to expand into more sophisticated channels, including wearable devices, IoT devices, and even virtual reality (VR) and augmented reality (AR) technologies.

Improving service offerings

5G in banking will allow more complex transactions, such as auto loans and mortgages, to be processed at reduced cost. The enhanced connectivity provided by 5G will improve the performance of existing banking applications and websites, offering customers a seamless and efficient digital banking experience. Furthermore, upgrading ATMs and kiosks to 5G technology will enable faster service by giving customers quick access to their money.

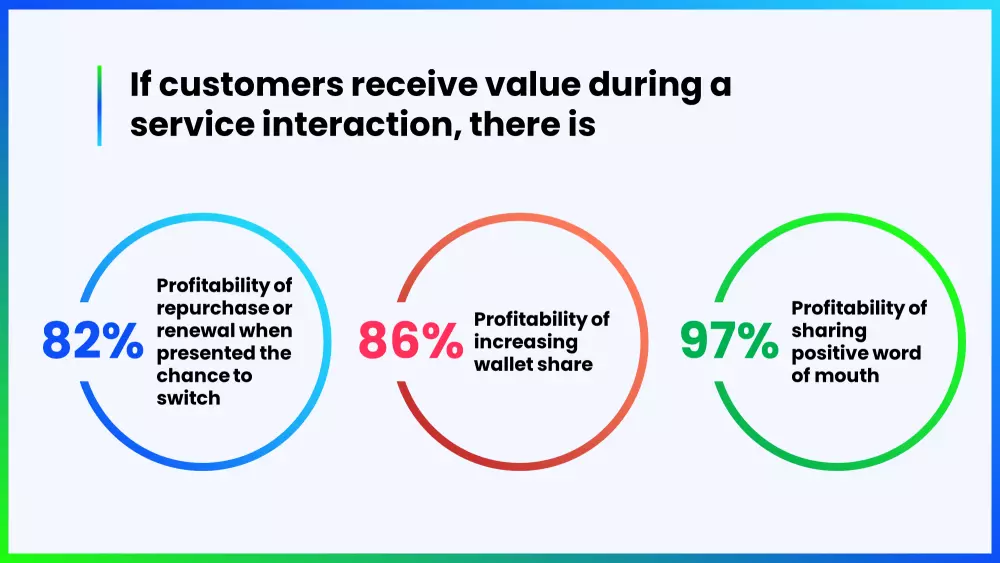

Improving customer experience

The adoption of 5G in banking will significantly improve customer experiences by providing access to a wide range of personalized services. Combined with edge computing, 5G technology will reduce network latency and thereby deliver better services to banking customers.

Fraud prevention

5G technology can help prevent fraud in the banking, financial services, and insurance (BFSI) sector by facilitating faster data sharing and simplifying mobile and digital payment solutions. 5G in digital payments will eliminate performance issues related to wearables and IoT-connected devices. Furthermore, 5G technology enhances the security of online payments by facilitating the real-time transmission of larger volumes of data across networks.

Banking for everyone

The robust connectivity provided by 5G in banking allows customers to easily access their bank accounts and utilize a wide range of banking services. However, despite the significant benefits, the BFSI sector faces several challenges during the implementation of digital transformation. These challenges include:

- Evolving away from legacy applications: The existence of certain legacy systems within the banking sector isn’t conducive to the digitalization of banking services. Many major banking systems, for example, are built using the COBOL programming language, which has been around for over 60 years.

- Solving security issues at scale: In addition to ensuring the protection of social communication channels, banks currently encounter a significant challenge in safeguarding their IT infrastructure and all other associated data.

- Securing social media communications: Social media is expected to be one of the primary sources of communication for customers. Accelerating client communications on social media presents an array of digital banking challenges, including security and compliance.

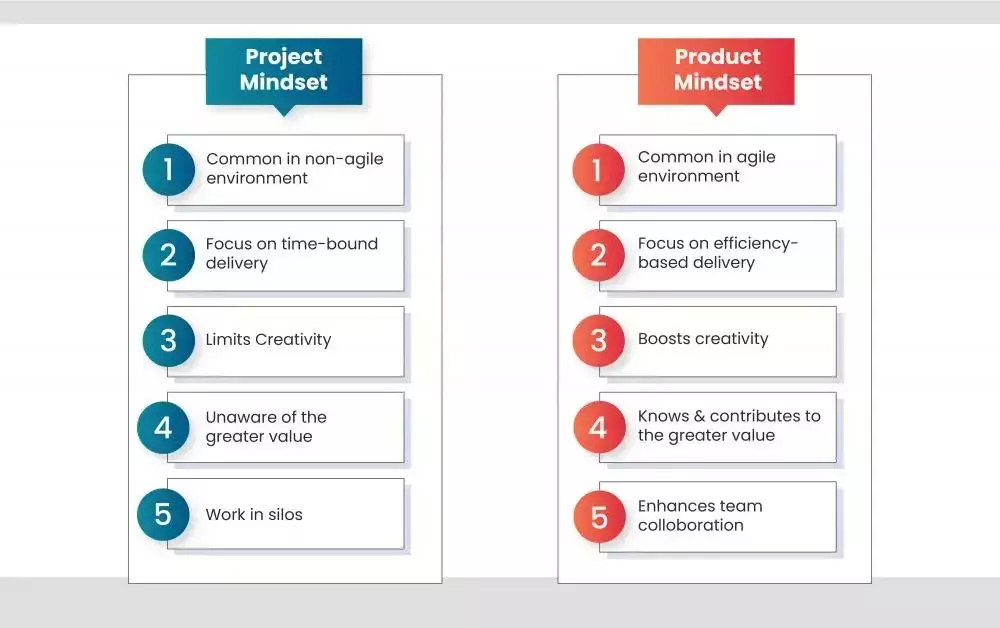

- Breaking down silos and mitigating risks: The siloed nature of banks has resulted in stunted growth, limited scalability, and lower customer satisfaction.

- Choosing between bricks-and-mortar and digital: Banking service providers must recognize that customers’ persistent need to visit physical branches is one of the challenges in implementing digital banking.

To enable digital transformation in banking, the industry needs to embrace mobility, stay up to date with digital innovations, enable automation, implement smart digital solutions, and support multichannel services alongside human interactions.

5G in digital payments

According to projections, the global revenue from digital payments is expected to reach $14.79 trillion by 2027. This significant growth can be attributed to several factors, including the widespread adoption of smartphones, the increase in e-commerce sales, and the surge in internet activities worldwide. Looking ahead, the landscape of digital payments is poised for continued expansion, fundamentally transforming the way individuals and businesses conduct financial transactions on a global scale.

With the advent of 5G technology, digital payments are poised to become increasingly appealing to both consumers and merchants. The faster and more seamless payment options offered by 5G technology will contribute to the greater adoption of digital payment methods. As 5G technology becomes more prevalent, the transition to digital payments is becoming crucial because of the numerous advantages they offer.

Advantages of digital payments

- Convenience: One of the most significant advantages of digital payments is the seamless experience they provide to customers. With reduced dependency on cash, quicker transfers, and effortless transactions, digital payments have become the preferred choice.

- Security: Handling and managing cash payments can be burdensome and tiresome. Most digital payment platforms, by contrast, offer customers regular updates, notifications, and statements so that they can easily track their funds.

- Faster transactions: Digital payments enable you to expedite the checkout process and receive payment almost instantly. Whether in-store or online, digital payment methods involve only a simple tap or swipe, which simplifies the transaction.

- Less manual work: Digital payments technology reduces manual work by unifying the entire process into a single automated workflow.

Conclusion

As financial institutions increasingly collaborate with fintech companies to offer customers seamless and interconnected banking experiences, the advent of 5G technology is expected to create a favorable environment for such advancements. With the integration of 5G technology into digital payment applications, the future of banking is just starting to take shape.

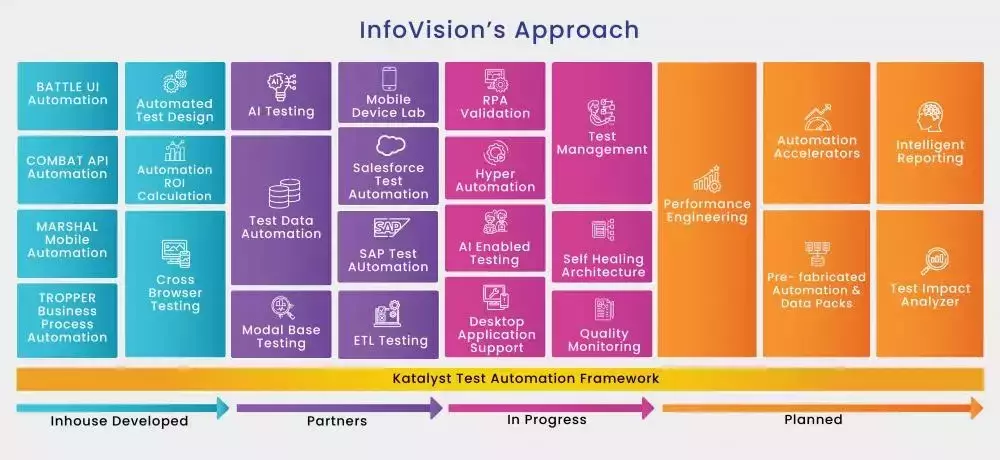

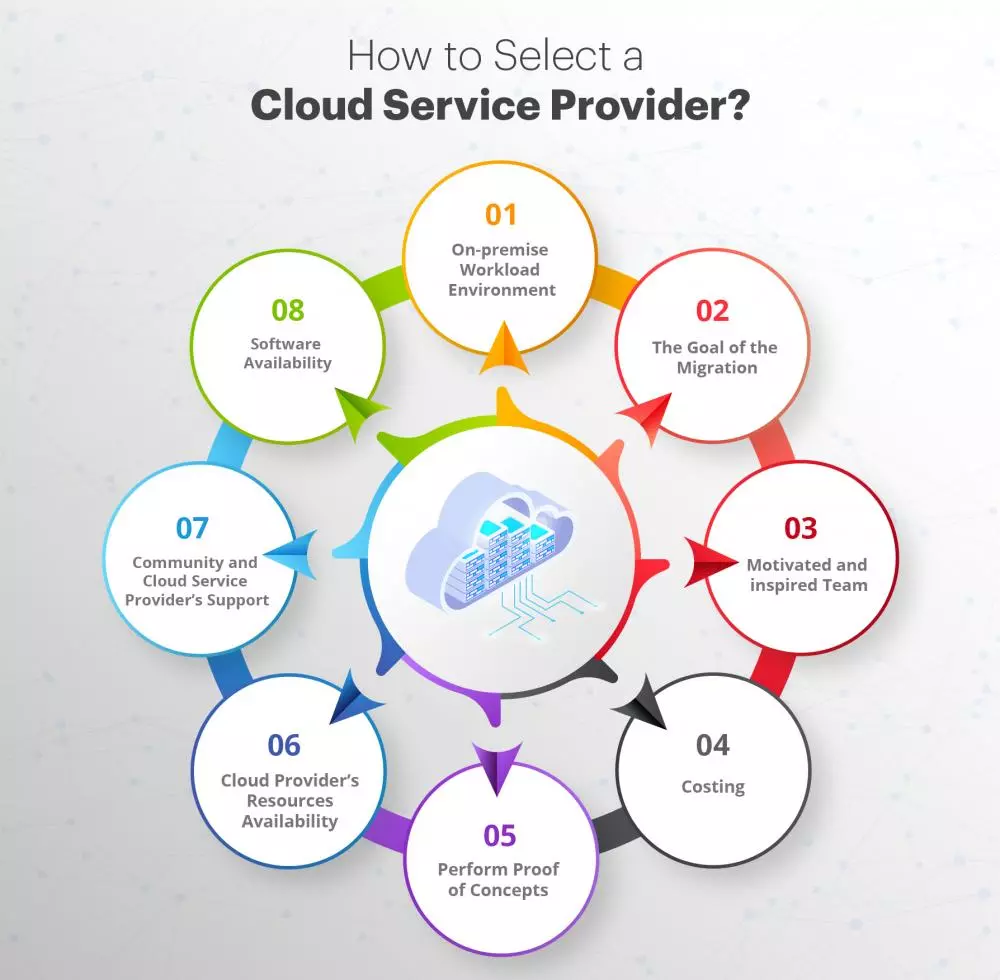

In today’s uncertain landscape, banking and financial services organizations need a strategic approach that prioritizes customer experience, operational excellence, and unique competitive advantages driven by digital solutions. InfoVision offers a comprehensive range of emerging technologies, cloud expertise and an innovation ecosystem to assist these organizations in transforming their operations.

We engage in extensive partnerships to implement, secure, and scale continuous KYC and risk segmentation practices, which contribute to a comprehensive analysis of customer behavior. Our customers have successfully used these approaches, and our capabilities to execute them, to enhance security and improve customer satisfaction at the same time.

By exploring InfoVision’s wide array of offerings, one can harness the disruptive opportunities presented by the digital economy, which include:

- Faster cross-border payments

- Open banking

- Digitalized payments

- Mobile banking platform

- Digital wallet

- Shared loyalty reward program

- Commercial banking

- Core banking upgrades

- Intelligent operations

- AI-driven fraud analytics

- Cloud readiness

Together, let’s tap into the potential of digital transformations and maximize our success in the ever-evolving digital landscape.